Ingest CSV data into system tables

This guide demonstrates how to ingest CSV data files into Deephaven system tables, covering both command-line and UI-driven methods. For command-line operations, these instructions assume a typical Deephaven installation.

Note

If you want to read a CSV file directly into an in-memory Deephaven table for interactive analysis, see the Core guide on reading CSV data into tables.

Schema preparation

Before ingesting any CSV data, whether via the command line or the UI, a schema defining the data's structure must be generated and deployed. This schema is crucial for Deephaven to correctly interpret and store your data.

Generate a schema

The first step is to generate a schema file from a sample of your CSV data. This schema will define column names, data types, and other ingestion parameters.

See the Schema inference page for a detailed guide on generating a schema from a sample CSV file.

Deploy the schema

Once you have a schema file (e.g., /tmp/MyNamespace.MyTable.schema), you need to deploy it. The dhconfig schema import command makes this schema definition known to your Deephaven instance.

Replace /tmp/MyNamespace.MyTable.schema with the actual path to your generated schema file.

Command-line import with iris_exec csv_import

With a schema prepared and deployed, you can ingest CSV data from the command line using the iris_exec csv_import command. The iris_exec tool is part of the Deephaven installation and is typically found in /usr/illumon/latest/bin/.

General syntax

The iris_exec csv_import command follows this general structure. Arguments before the -- (double dash) are launch arguments for the iris_exec utility itself, while arguments after the -- are specific to the csv_import operation.

It's common to run this command as the dbmerge user, especially when writing to Deephaven's data directories.
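The Python sketch below illustrates how the pieces of the command line fit together. It builds, but does not run, an argument list in which iris_exec launch arguments go before `--` and importer arguments go after it. It uses only the importer flags listed in the CSV importer arguments table. The destination and source specification options are left out because their flags are not spelled out in this guide. `build_csv_import_argv` is a hypothetical helper, not part of Deephaven. Running as dbmerge via `sudo -u` is an assumption about how you switch users on your system.

```python
import shlex
from typing import List, Optional, Sequence

# Typical location of iris_exec according to this guide; adjust for your install.
IRIS_EXEC = "/usr/illumon/latest/bin/iris_exec"


def build_csv_import_argv(
    namespace: str,
    table_name: str,
    *,
    output_mode: Optional[str] = None,
    file_format: Optional[str] = None,
    delimiter: Optional[str] = None,
    trim: bool = False,
    relaxed_checking: bool = False,
    source_name: Optional[str] = None,
    constant_value: Optional[str] = None,
    launch_args: Sequence[str] = (),
    run_as: Optional[str] = "dbmerge",
) -> List[str]:
    """Hypothetical helper: build (but do not run) an iris_exec csv_import command."""
    argv: List[str] = []
    if run_as:
        # Assumption: sudo is used to run the import as another user (e.g. dbmerge).
        argv += ["sudo", "-u", run_as]
    # Launch arguments for iris_exec itself go before the "--" separator.
    argv += [IRIS_EXEC, "csv_import", *launch_args, "--"]
    # Everything after "--" is passed to the CSV importer.
    argv += ["-ns", namespace, "-tn", table_name]
    if output_mode:
        argv += ["-om", output_mode]
    if delimiter is not None:
        if len(delimiter) != 1:
            raise ValueError("-fd takes exactly one character")
        # The importer ignores -ff when -fd is given, so -ff is not emitted.
        argv += ["-fd", delimiter]
    elif file_format:
        argv += ["-ff", file_format]
    if trim:
        argv.append("-tr")
    if relaxed_checking:
        argv += ["-rc", "TRUE"]
    if source_name:
        argv += ["-sn", source_name]
    if constant_value is not None:
        argv += ["-cv", constant_value]
    return argv


cmd = build_csv_import_argv("MarketOps", "TradeData", delimiter="|", trim=True)
# Quote for display so characters such as "|" survive a shell copy/paste.
print(shlex.join(cmd))
```

For a pipe-delimited file with extra whitespace, the printed command is `sudo -u dbmerge /usr/illumon/latest/bin/iris_exec csv_import -- -ns MarketOps -tn TradeData -fd '|' -tr`. You would still need to add the destination and source options your installation requires.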

Example command

This example ingests a CSV file named trades.csv, located in /data/incoming/, into the TradeData table in the MarketOps namespace. The data is written to the 2024-07-15 column partition under the prod_importer internal partition.

CSV importer arguments

The CSV importer takes the following arguments:

| Argument | Description |
|---|---|
| Destination Specification: | Specifies the target location for the imported data. One of the following methods must be used: |
| -ns or --namespace <namespace> | (Required) Namespace in which to find the target table. |
| -tn or --tableName <name> | (Required) Name of the target table. |
| -fd or --delimiter <delimiter character> | (Optional) Field delimiter. Allows specification of a character other than the file format default as the field delimiter. If a delimiter is specified, fileFormat is ignored. This must be a single character. |
| -ff or --fileFormat <format name> | (Optional) The Apache Commons CSV parser is used to parse the file itself. Five common formats are supported: |
| -om or --outputMode <import behavior> | (Optional): |
| -rc or --relaxedChecking <TRUE or FALSE> | (Optional) Defaults to FALSE. If TRUE, target columns that are missing from the source CSV are allowed to remain null, and the import continues when data conversions fail. In most cases, this should be set to TRUE only when developing the import process for a new data source. If TRUE, conversion failures for Boolean columns are treated as FALSE when the string value is not recognized as TRUE. |
| Source Specification: | Defines the source CSV file(s). If none of these are provided, the system attempts a multi-file import. Options include: |
| -sn or --sourceName <ImportSource name> | Specific ImportSource to use. If not specified, the importer uses the first ImportSource block it finds that matches the type of the import (CSV/XML/JSON/JDBC). |
| -tr | Similar to the TRIM file format, but adds leading/trailing whitespace trimming to any format. For a comma-delimited file with extra whitespace, -ff TRIM is sufficient. For a file using a delimiter other than a comma, use the -tr option in addition to -ff or -fd. |
| -cv or --constantColumnValue <constant column value> | A literal value to use for the import column with sourceType="CONSTANT", if the destination schema requires it. |
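The -om argument controls what happens when data already exists in the target partition. The Python sketch below models that decision. It assumes the command-line modes behave like the Safe, Append, and Replace options described for the Output Mode setting of the CSV Import Query. A fully-specified partition is keyed by both the internal partition and the column partition value. The in-memory `store` dictionary, `write_partition`, and the `OutputMode` value strings are hypothetical stand-ins for on-disk storage, not Deephaven APIs.

```python
from enum import Enum
from typing import Dict, List, Tuple

# A fully-specified partition: (internal partition, column partition value).
PartitionKey = Tuple[str, str]


class OutputMode(Enum):
    # Assumed names, mirroring the Safe/Append/Replace options of the UI.
    SAFE = "SAFE"
    APPEND = "APPEND"
    REPLACE = "REPLACE"


def write_partition(
    store: Dict[PartitionKey, List[dict]],
    key: PartitionKey,
    rows: List[dict],
    mode: OutputMode,
) -> None:
    """Illustrative model of output-mode behavior; not Deephaven code."""
    existing = store.get(key)
    if existing:
        if mode is OutputMode.SAFE:
            # Safe: refuse to touch a partition that already holds data.
            raise RuntimeError(f"data already exists in partition {key}")
        if mode is OutputMode.APPEND:
            # Append: add the new rows after the existing ones.
            existing.extend(rows)
            return
    # Empty partition, or Replace: write only this fully-specified partition.
    store[key] = list(rows)
```

Applying REPLACE to ("prod_importer", "2024-07-15") leaves rows stored under a different internal partition for the same date untouched. That is the distinction the Replace option draws between a fully-specified partition and a column partition value.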

Warning

After completing a CSV import, you must run a rescan command for the imported data to become available in Deephaven. The Data Import Server (DIS) will not automatically detect the new data.

Run this command to rescan a specific table:

For example, to rescan the TradeData table in the MarketOps namespace:

See the Data control tool rescan documentation for more details.

Handling Boolean values

Target table columns of type Boolean are a special case when ingesting CSV data.

- By default, the CSV importer interprets string data from the source file as follows: 0, F, f, or any case of false is written as a Boolean false.

- Similarly, 1, T, t, or any case of true is written as a Boolean true.

- Any other value results in a conversion failure. This failure may be continuable if a default value is set for the column or if the relaxedChecking argument is TRUE. (If relaxedChecking is TRUE and the conversion is for a Boolean column, unrecognized values are treated as FALSE, as noted in the argument's description.)

- To convert from other representations of true/false (e.g., foreign-language strings), a formula or transform is needed, along with sourceType="String" to ensure that the data is read from the CSV field correctly.
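The default Boolean rules above can be summarized as a small lookup. The Python sketch below is an illustration of those rules only. `parse_csv_boolean` and `BooleanConversionError` are hypothetical names. The sketch does not model column default values or the formula/transform route for other representations.

```python
class BooleanConversionError(ValueError):
    """Raised when a string cannot be interpreted as a Boolean."""


def parse_csv_boolean(value: str, relaxed_checking: bool = False) -> bool:
    """Illustrative model of the default CSV Boolean rules; not Deephaven code."""
    # 0, F, f, or any case of "false" -> False
    if value in ("0", "F", "f") or value.lower() == "false":
        return False
    # 1, T, t, or any case of "true" -> True
    if value in ("1", "T", "t") or value.lower() == "true":
        return True
    if relaxed_checking:
        # With relaxedChecking TRUE, unrecognized values are treated as FALSE.
        return False
    raise BooleanConversionError(f"cannot convert {value!r} to Boolean")
```

For example, "FALSE" and "f" both map to False, and "TrUe" maps to True. "yes" raises an error unless relaxed checking is on, in which case it silently becomes False. This is why relaxedChecking is best reserved for developing a new import.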

CSV Import Query

Note

Ensure you have completed the Schema preparation steps before proceeding with a UI ingest.

Note

At this time, CSV Import Queries are only available in the Legacy Engine. Select a Legacy worker to configure this query type.

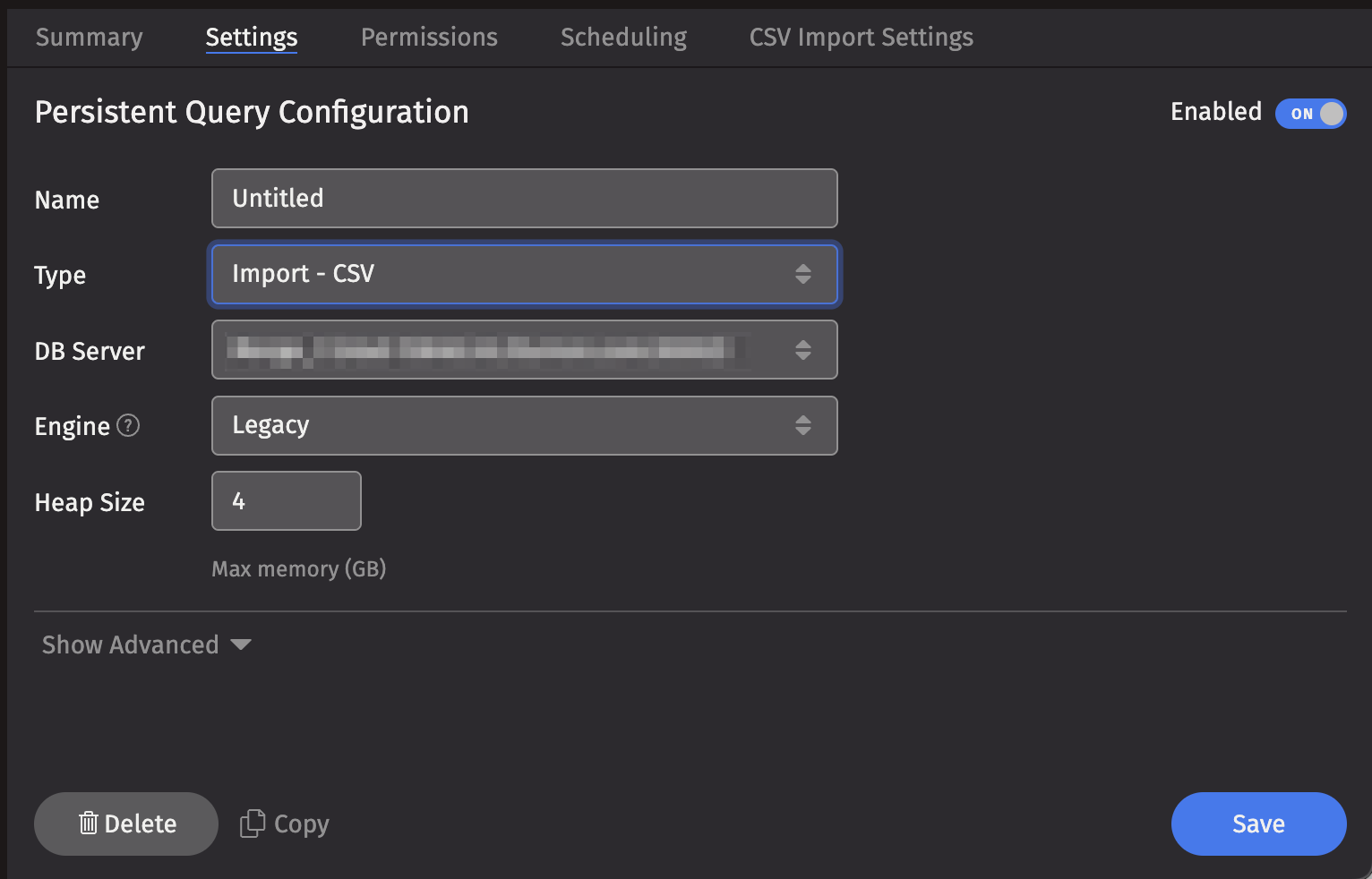

To create a CSV Import Query, click the +New button above the Query List in the Query Monitor and select the type Import - CSV.

- Select a DB Server, choose an Engine (Core+ or Legacy), and enter the desired value for Memory (Heap) Usage (GB).

- Options available in the Show Advanced Options section of the panel are typically not used when ingesting or merging data.

- The Permissions tab presents a panel with the same options as all other configuration types, and gives the query owner the ability to authorize Admin and Viewer Groups for this query.

- Click the Scheduling tab to access a panel with the same scheduling options as all other configuration types.

- Click the CSV Import Settings tab to access a panel with the options pertaining to ingesting a CSV file:

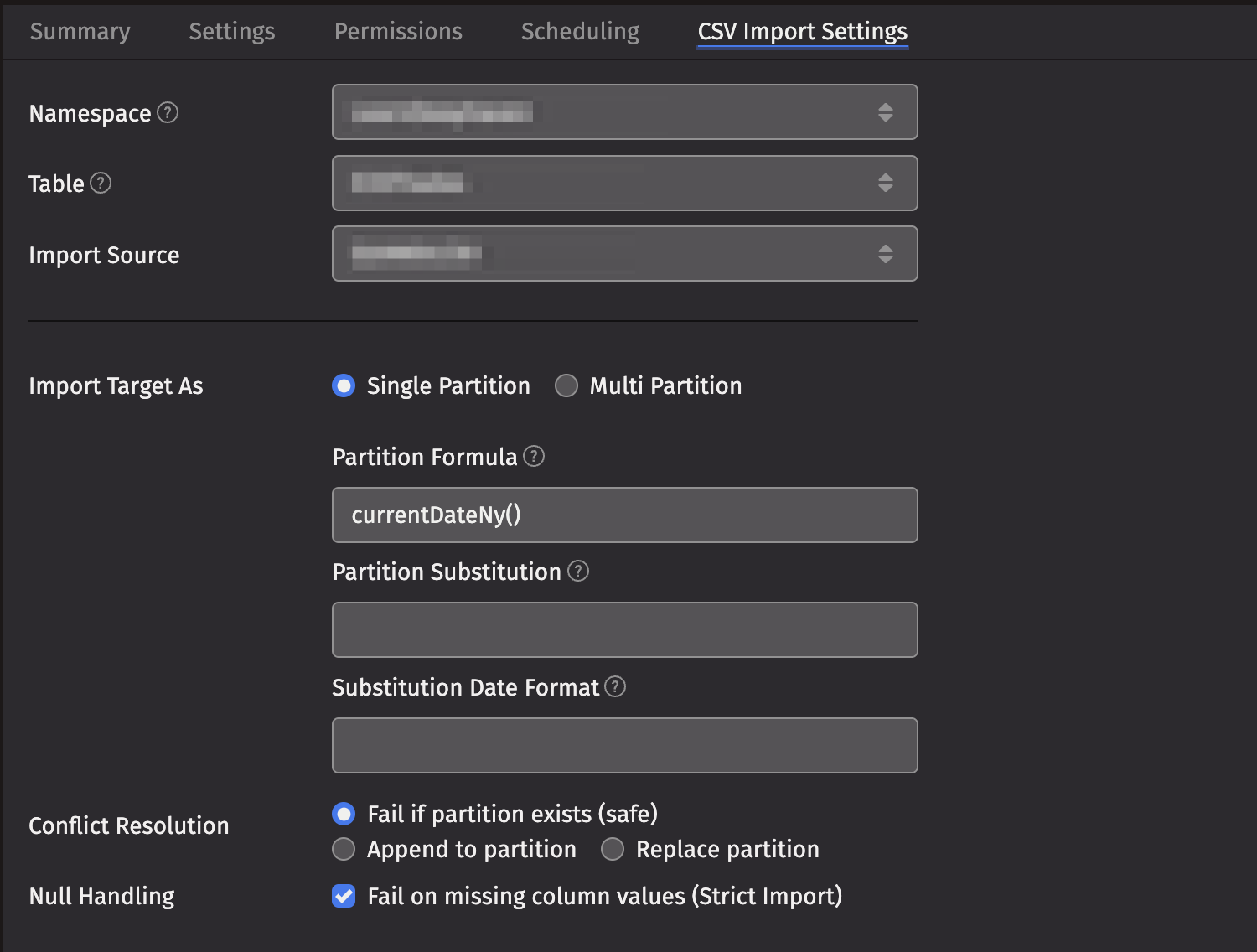

CSV Import Settings

- Namespace: This is the namespace into which you want to import the file.

- Table: This is the table into which you want to import the data.

- Import Source: This is the import source section of the associated schema file that specifies how source data columns will be set as Deephaven columns.

- Strict Import: A strict import will fail if a file being imported has missing column values (nulls), unless those columns allow or replace the null values using a default attribute in the ImportColumn definition.

- Output Mode: This determines what happens if data is found in the fully-specified partition for the data. The fully-specified partition includes both the internal partition (unique for the import job) and the column partition (usually the date).

  - Safe: The import job will fail if existing data is found in the fully-specified partition.

  - Append: If existing data is found in the fully-specified partition, the new data will be appended to it.

  - Replace: If existing data is found in the fully-specified partition, it will be replaced. This does not replace all data for a column partition value, just the data in the fully-specified partition.

- Single/Multi Partition: This controls the import mode. In single-partition mode, all of the data is imported into a single Intraday partition. In multi-partition mode, you must specify a column in the source data that determines the partition into which each row is imported.

  - Single-partition configuration:

    - Partition Formula: This is the formula needed to partition the CSV being imported. If a specific partition value is used, it must be surrounded by quotes. For example: currentDateNy() or "2017-01-01".

    - Partition Substitution: This is a token used to substitute the determined column partition value into the source directory, source file, or source glob, allowing these fields to be determined dynamically. For example, if the partition substitution is "PARTITION_SUB" and the source directory includes "PARTITION_SUB" in its value, that token will be replaced with the partition value determined from the partition formula.

    - Substitution Date Format: This is the date format used when a Partition Substitution is used. The standard Deephaven date partition format is yyyy-MM-dd (e.g., 2018-05-30), but this setting allows substitution in another format. For example, if the filename includes the date in yyyyddMM format instead (e.g., 20183005), that pattern could be entered in the Substitution Date Format field. All the patterns from the Java DateTimeFormatter class are allowed.

  - Multi-partition configuration:

    - Import Partition Column: This is the name of the database column used to choose the target partition for each row (typically "Date"). There must be a corresponding Import Column present in the schema, which will indicate how to get this value from the source data.

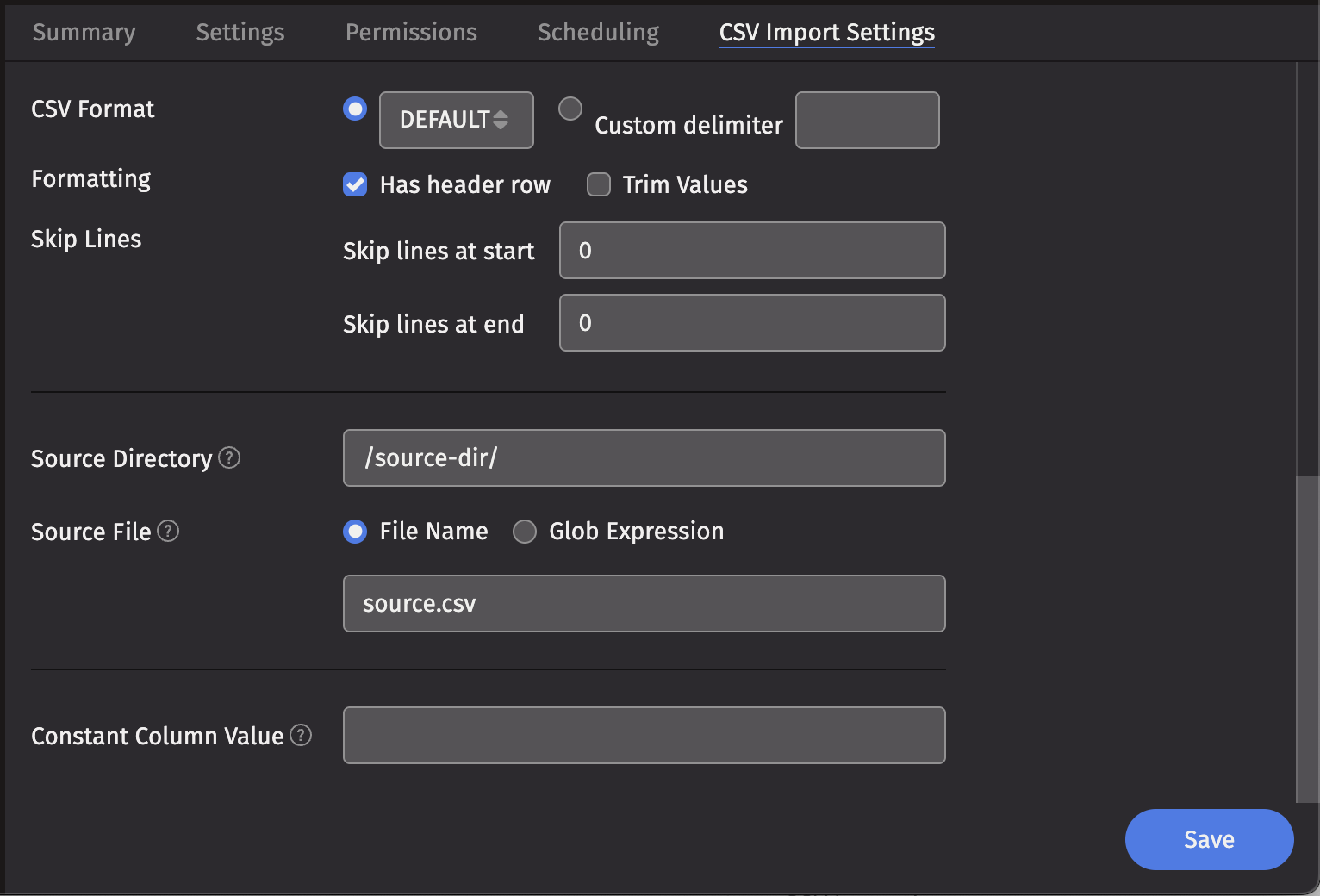

- File Format: This is the format of the data in the CSV file being imported. Options include DEFAULT, TRIM, EXCEL, TDF, MYSQL, RFC4180, and BPIPE*.

- Delimiter: This can be used to specify a custom delimiter character if something other than a comma is used in the file.

- Skip Lines: This is the number of lines to skip before parsing begins (before the header line, if any).

- Skip Footer Lines: This is the number of footer lines to skip at the end of the file.

- Source Directory: This is the path to where the CSV file is stored on the server on which the query will run.

- Source File: This is the name of the CSV file to import.

- Source Glob: This is an expression used to match multiple CSV file names.

- Constant Value: A String of data to make available as a pseudo-column to fields using the CONSTANT sourceType.

- Note: BPIPE is the format used for Bloomberg's Data License product.
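The Partition Substitution and Substitution Date Format settings amount to reformatting a date and substituting it into a string. The Python sketch below assumes the partition value is in the standard yyyy-MM-dd form. Python's `strftime` uses different directives from Java's `DateTimeFormatter`. For that reason, the sketch uses a deliberately tiny, hypothetical mapping that covers only the `yyyy`, `MM`, and `dd` patterns and rejects anything else. The real setting accepts any `DateTimeFormatter` pattern. The function names and paths are illustrative.

```python
import re
from datetime import datetime

# Hypothetical mapping from a few Java DateTimeFormatter patterns to strftime.
_JAVA_TO_STRFTIME = (("yyyy", "%Y"), ("MM", "%m"), ("dd", "%d"))


def java_pattern_to_strftime(pattern: str) -> str:
    """Translate yyyy/MM/dd patterns; reject any other pattern letters."""
    result = pattern
    for java, py in _JAVA_TO_STRFTIME:
        result = result.replace(java, py)
    leftover = re.sub(r"%[Ymd]", "", result)
    if re.search(r"[A-Za-z]", leftover):
        raise ValueError(f"unsupported pattern letters in {pattern!r}")
    return result


def substitute_partition(template: str, token: str, partition_value: str,
                         date_format: str = "yyyy-MM-dd") -> str:
    """Replace token in template with partition_value rendered in date_format."""
    # The partition value is assumed to be in the standard yyyy-MM-dd form.
    parsed = datetime.strptime(partition_value, "%Y-%m-%d")
    formatted = parsed.strftime(java_pattern_to_strftime(date_format))
    return template.replace(token, formatted)


print(substitute_partition("/data/incoming/PARTITION_SUB/trades.csv",
                           "PARTITION_SUB", "2018-05-30", "yyyyddMM"))
```

With the yyyyddMM format from the example above, this prints `/data/incoming/20183005/trades.csv`. With the default format, the token is replaced by `2018-05-30`.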

Warning

After completing a CSV Import Query, you must run a rescan command for the imported data to become available in Deephaven. The Data Import Server (DIS) will not automatically detect the new data.

Run this command to rescan a specific table:

See the Data control tool rescan documentation for more details.