The farm-to-table concept in modern dining provides increased food safety, flavor, and freshness through increased traceability. In dining, knowing where your food comes from not only improves its flavor, but your confidence in its quality as well. The same can be said about your data. Extracting straight from the database simplifies workflows. Then, with the power of Deephaven, transform and load your data with ease. So, why not go directly from database-to-table?

Deephaven has added SQL to its list of supported data sources. Go directly from database-to-table and see how easy it is.

SQL is, has been, and will be the most popular database management language for the forseeable future. Deephaven is a query engine that excels at handling real-time data in conjunction with static volumes at scale. Bringing them together is like when your favorite food combination comes together, and the ingredients are sourced from a local producer you trust. Storage solutions like SQL and Parquet are well-trusted by their users.

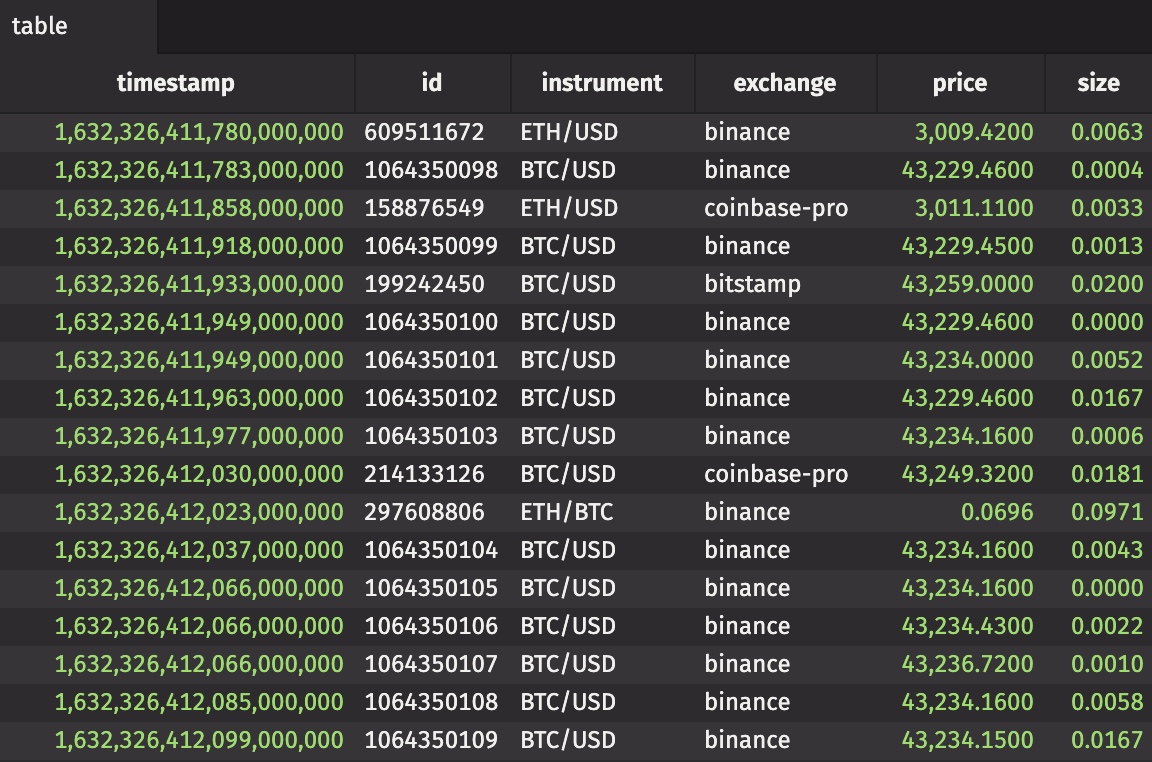

In this blog, you'll see how to ingest your data with SQL and read the results directly into a table via Deephaven's Python API. In Community Core, you can manipulate and modify your data in numerous ways.

Prerequisites

If you have deephaven-core running with v0.22 or later, and you have access to a SQL DB, then there's only a couple of things to do before you can run as many SQL queries as your heart desires.

Install the necessary drivers

Database access from Python requires usage of drivers contained within external packages. Thus, you'll need to install the package for the driver you wish to use. The read_sql method can use the following three drivers to execute queries:

-

- To use connectorx, install connectorx.

-

- To use ODBC, install turbodbc.

-

- For a generic entrypoint to ADBC drivers, install adbc-driver-manager.

- For Arrow Flight SQL database access, install adbc-driver-flightsql.

- For PostgreSQL database access, install adbc-driver-postgresql.

- For SQLite database access, install adbc-driver-sqlite.

With the necessary driver installed, it's time to get querying!

Execute a query

The read_sql method takes three input arguments:

- A connection

- A query

- A driver

In this example, my connection is a string that specifies a Postgres DB. My query is just a SQL query in a string, and my driver is connectorx. (Note that Deephaven supports the sources and destinations that ConnectorX can support.) I store my username and password in environment variables.

That's all there is to it! With the data in memory, transform it with Deephaven.

Try it

Deephaven has you covered, no matter what storage solution you prefer. Get started with our tutorial.

Questions? Comments? Join us on Slack.