Opening Day is here. Somewhere around the third inning, the questions start piling up — who's sitting on 99 tonight? Why is that pitcher's spin rate down from last season? Can Ohtani actually hit that high slider?

The data exists. Statcast tracks every pitch, every swing, every batted ball in granular detail. But turning a question into an answer usually means writing queries, joining tables, and knowing which of the 90-something columns you actually need. Most fans and even many analysts don't make it that far.

This post shows a different path: load Statcast data into Deephaven, connect Claude to it via MCP, and ask questions in plain English. The agent reads the schema, writes the query, executes it, and returns the answer. You stay focused on the baseball.

"Who throws the fastest fastball in the AL East?" — That's the whole query. The AI handles the rest.

What you'll need

Two things before you start:

- Claude Desktop (or Cursor or Windsurf) — any AI tool with MCP support works. This post uses Claude Desktop.

- About 15 minutes — that's the realistic setup time start to finish.

The architecture is simple: Deephaven holds and queries the data. Claude figures out what to query and how to express it. MCP is the bridge between the two. We'll set these up in order.

Part 1: Get Deephaven running

Note

The code in this post runs in a Deephaven console. If you don't have Deephaven running yet, see the Quickstart guide — Docker or pip, under five minutes. Once it's running, install the extra dependency inside your environment:

Open http://localhost:10000 to confirm Deephaven is running. You'll see the browser-based console where you'll run the Python code in this post.

Part 2: Load the data

Open the Deephaven IDE at http://localhost:10000 and run the following in a new console. This pulls every pitch from the first week of the 2026 season:

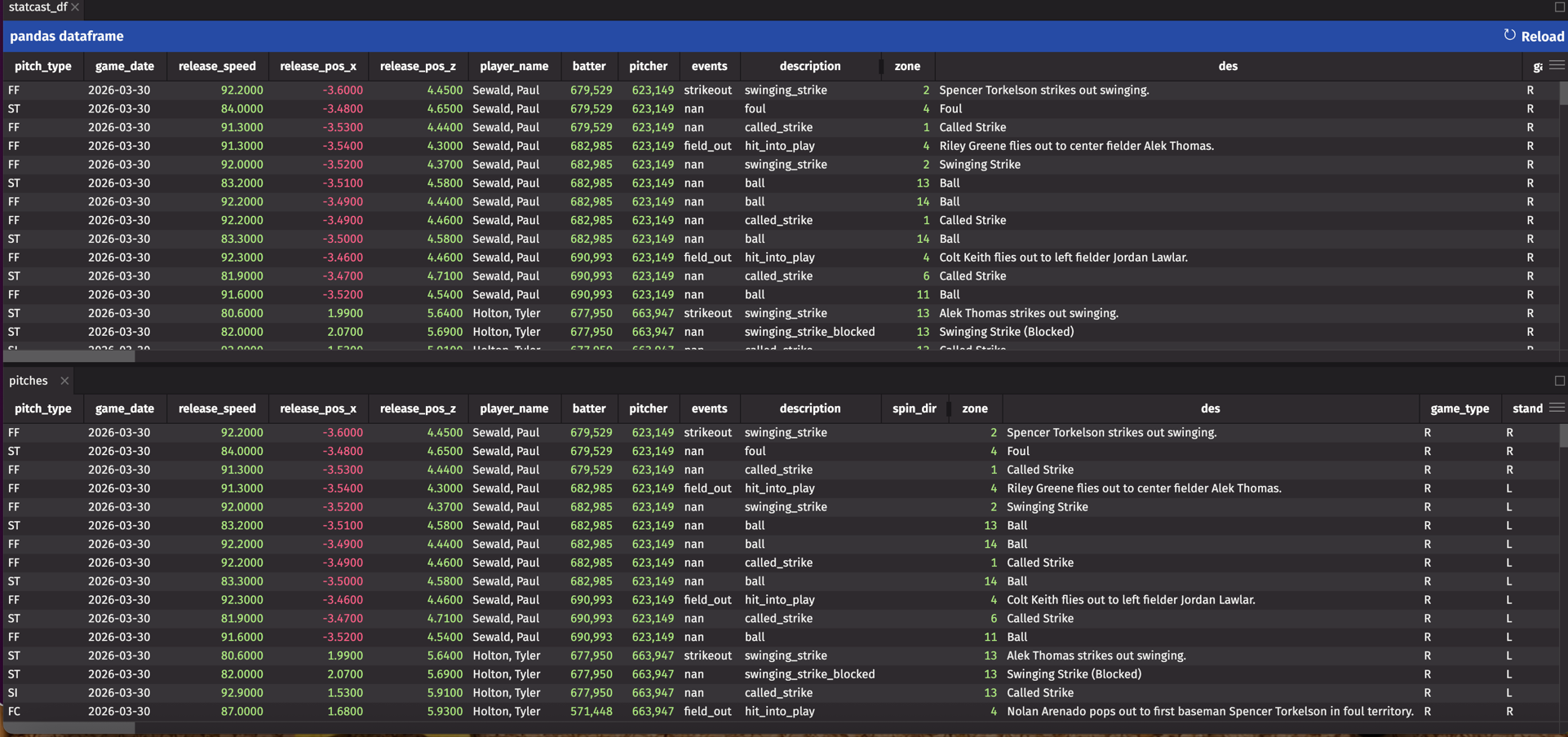

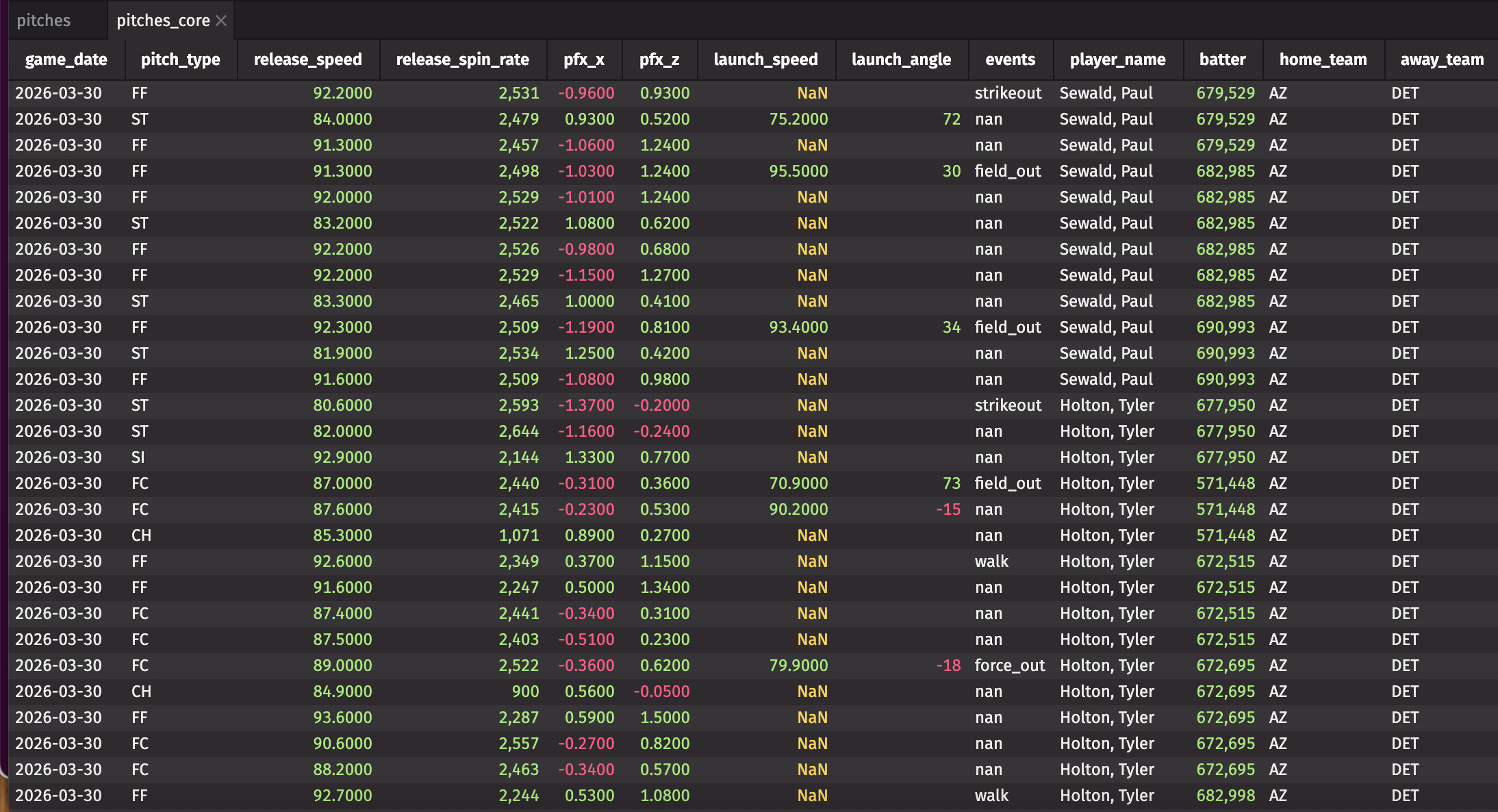

pitches is now a Deephaven table with 90+ columns per pitch: velocity, spin rate, movement, launch angle, exit velocity, pitch location, game state, and more. Working with all 90 columns at once is unwieldy, so create a focused view of the ones you'll actually query:

view creates a lightweight reference without copying data — good for exploration when the source dataset is large.

Adding division context

The raw Statcast data has home_team and away_team but no division information. To answer questions like "which AL East pitchers throw hardest," you need to derive the pitching team and join it against a reference table. Build that reference now, in the same Deephaven console:

Teams reference table

With the reference table built, derive the pitching team and join:

Now the AI can answer division-specific questions without you spelling out join logic every time you ask one.

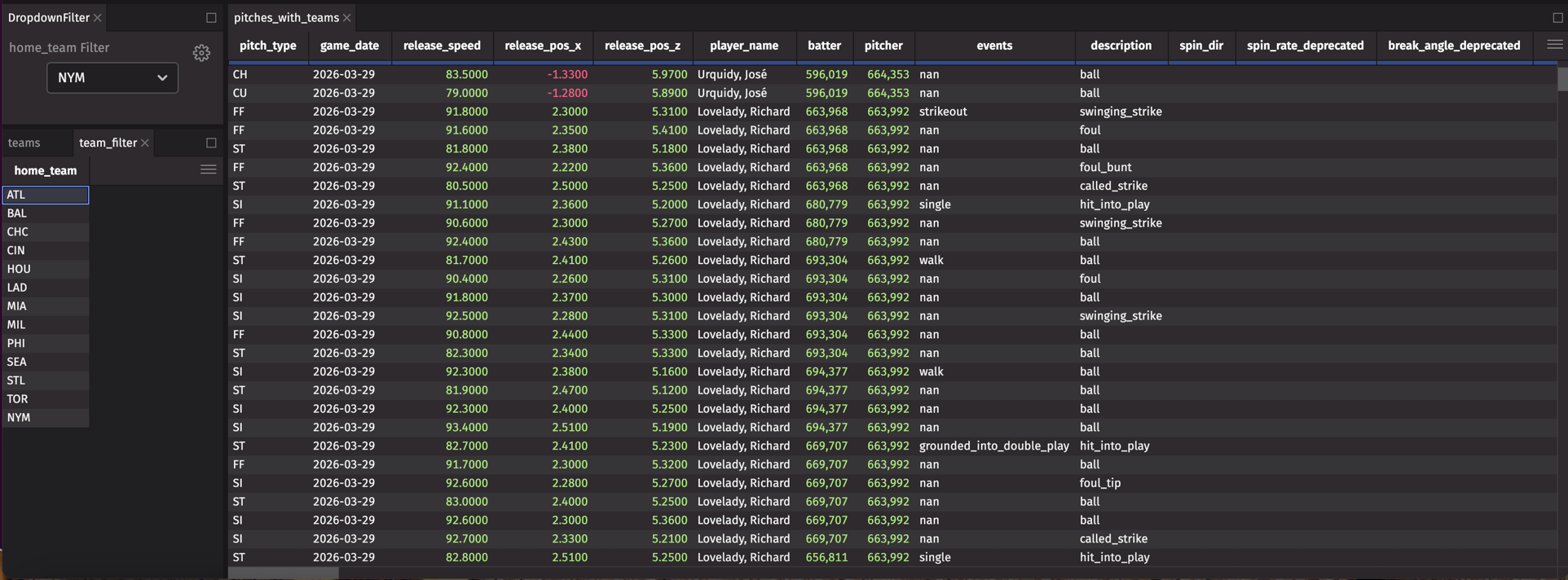

Filtering by team in the UI

For quick team-specific exploration, create a dropdown filter source:

Open Controls > Dropdown Filter in the Deephaven IDE, set home_team as both the source and filter column, and any table with a home_team column filters to your chosen team instantly.

Part 3: Connect Claude

The data is loaded and structured. Now connect Claude Desktop so it can query that data.

Install Deephaven MCP

Install the MCP package:

This is the MCP server that runs on your machine and bridges Claude to your Deephaven session.

Configure Claude Desktop

Open Claude Desktop's configuration file. On macOS it lives at ~/Library/Application Support/Claude/claude_desktop_config.json. Add this block:

Create deephaven_mcp.json and point it at your running Deephaven session. Use the PSK token from your Deephaven startup logs (Docker) or your server configuration (pip):

If you started Deephaven with anonymous auth or via pip, set "auth_type": "Anonymous" and omit auth_token. Full configuration options are in the MCP setup guide.

Restart Claude Desktop after saving the config. You'll know the connection is active when Deephaven tools appear in Claude's interface.

Asking questions

With Deephaven holding the data and Claude connected to it, you can type questions instead of writing queries. Claude reads the table schema, figures out how to express the question as a Deephaven operation, executes it, and returns the result.

The conversations below show what the interaction looks like. The player names and numbers are illustrative — your results will reflect the real Statcast data you loaded.

"Who throws the fastest fastball in the AL East?"

You: Who throws the fastest fastball in the AL East?

Claude: Looking at

pitches_with_teamsfor four-seam fastballs in the AL East...

Result:

| player_name | max_velo |

|---|---|

| Félix Bautista | 101.8 |

| Aroldis Chapman | 100.4 |

| Gerrit Cole | 100.2 |

Félix Bautista tops the list at 101.8 mph. Claude mapped "fastest fastball" to max release_speed on pitch_type = 'FF', and "AL East" to a division filter on the joined table — that join setup from Part 2 is doing real work here.

"Show me pitchers whose spin rate dropped this month."

You: Show me pitchers whose spin rate dropped significantly in the last month.

Claude: Comparing average spin rates — last 30 days versus the 30 days before...

Result:

| player_name | recent_spin | prior_spin | change_pct |

|---|---|---|---|

| Corbin Burnes | 2,412 | 2,589 | -6.8% |

| Dylan Cease | 2,198 | 2,341 | -6.1% |

That's a multi-step query — time windows, per-pitcher aggregations, percentage calculations — expressed as a single sentence.

"Which hitters struggle against high sliders?"

You: Which hitters struggle most against high sliders?

Claude: Filtering for sliders above the zone (plate_z > 2.5), then calculating whiff rate and batting average...

Result:

| batter_name | pitches_seen | whiff_rate | ba |

|---|---|---|---|

| Joey Gallo | 47 | 52.3% | .043 |

| Kyle Schwarber | 38 | 48.7% | .079 |

"Compare Ohtani's exit velocity this year vs last year"

You: Compare Ohtani's exit velocity in 2026 versus 2025.

Claude: Pulling Shohei Ohtani's batted ball data for both seasons...

Result:

| Season | Avg exit velo | Max exit velo | Hard-hit % |

|---|---|---|---|

| 2025 | 93.2 | 118.4 | 54.2% |

| 2026 | 94.1 | 119.1 | 56.8% |

Exit velocity is up slightly, with hard-hit rate climbing 2.6 points year over year.

The agent writes queries you'd spend ten minutes constructing — and explains what the numbers mean.

Replaying a real game

The queries above work on any historical dataset. The more interesting case is asking those same questions while a game is still happening — before you know how the at-bat ends.

The easiest way to test that without waiting for a live game is to replay real historical data pitch by pitch. Game 1 of the 2024 World Series has a useful ending: Dodgers trailing 3–2 in the tenth, bases loaded, Freddie Freeman at the plate. Load it and sort into game order:

Then stream it into a ticking table using DynamicTableWriter:

live_game is now a ticking table. Pitches arrive in game order while the thread runs, and every downstream query updates automatically as new rows land. Ask Claude mid-replay:

You: What's Gerrit Cole's pitch mix and average velocity so far?

Claude: Filtering

live_gamefor Cole's pitches and grouping by type...

Result:

| pitch_type | n | avg_velo |

|---|---|---|

| FF | 45 | 96.7 |

| SL | 17 | 89.9 |

| KC | 11 | 83.9 |

| FC | 9 | 92.5 |

| CH | 4 | 89.7 |

| SI | 2 | 96.8 |

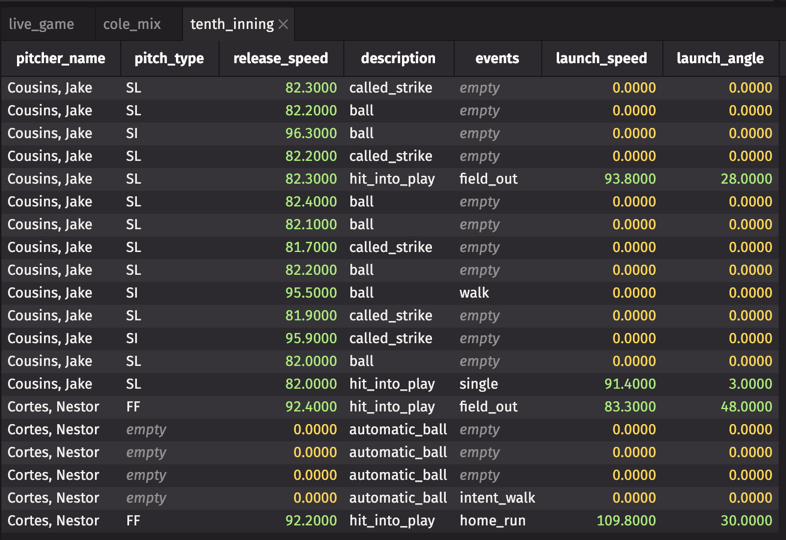

Run the same query five minutes later and the counts are higher — live_game keeps growing as pitches arrive. Ask about the tenth inning once it loads:

You: What happened in the bottom of the tenth?

Claude: Filtering

live_gamefor inning 10, bottom half...

Result:

The last row is the one you came for: Nestor Cortes, 92.2 mph fastball, 109.8 mph off the bat at 30° — Freeman's grand slam, in actual Statcast data.

Going live during game time

The replay above uses the same architecture as a live feed. The only difference is the source: instead of replaying a sorted DataFrame row by row, you pull from a real-time API, a Kafka topic, or any other streaming source and write rows into the same DynamicTableWriter as they arrive.

For polling-based sources, function_generated_table wraps any fetch function into a ticking table on a schedule:

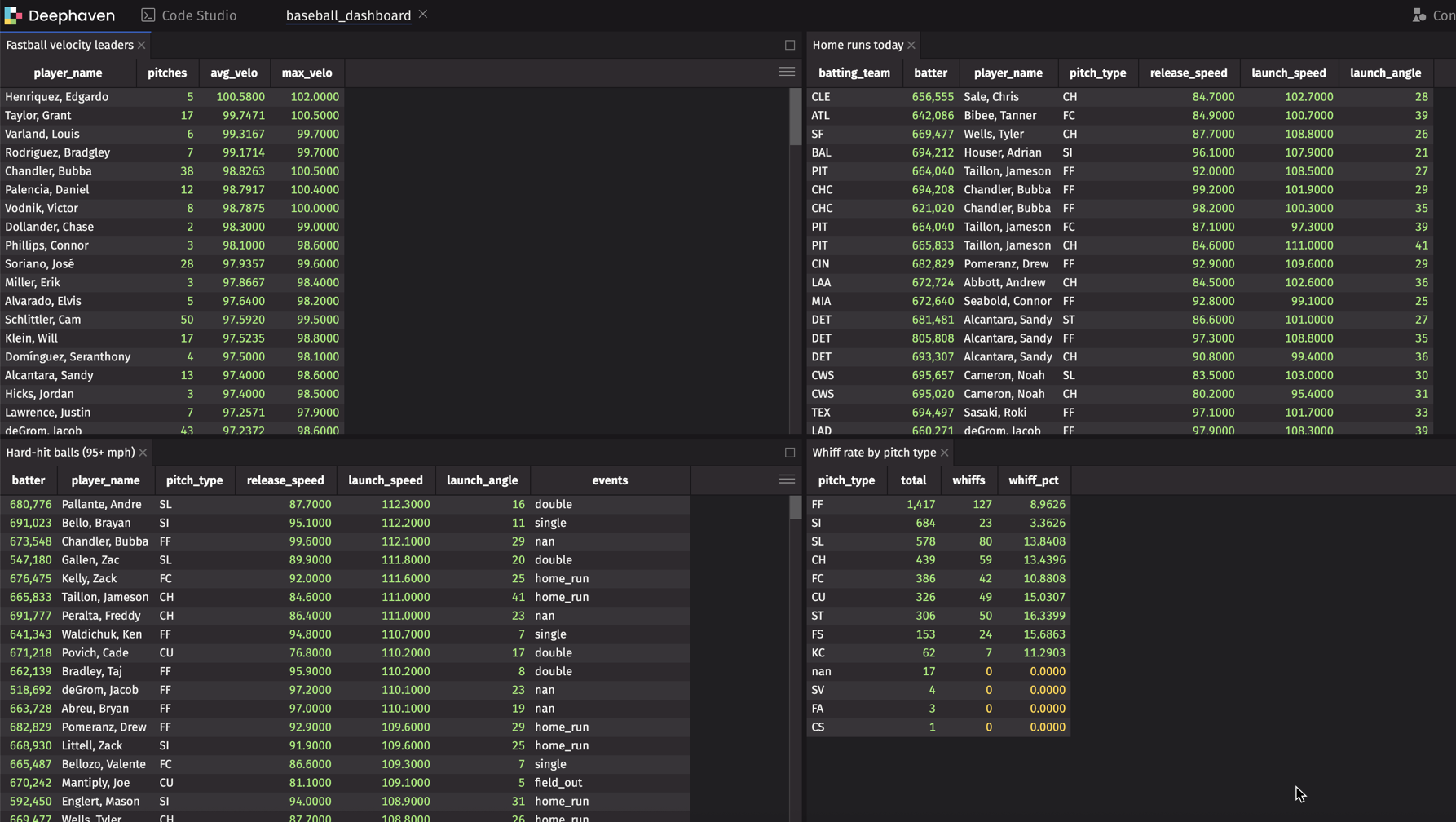

Every table built from live_pitches updates automatically on each refresh. Wire them into a deephaven.ui dashboard to get a live scoreboard:

All four panels update every five minutes without rerunning anything. Claude can query any of the underlying tables the same way it queried the replay — the architecture is identical.

Note

Deephaven connects natively to Kafka, Redpanda, and other streaming sources. See the Kafka integration guide for how to swap in a real-time feed.

Try it yourself

- Try it in the browser — no install required.

- Install Deephaven — free, takes about five minutes.

- Connect Claude — point your AI at your Deephaven session.

- Load Statcast data — Part 2 covers season-level setup; the replay section covers loading a specific game.

- Start asking — one question in plain English, no queries to write.

The same pattern works for any sport with structured data — NBA shot tracking, NFL play-by-play, soccer event data. If you can get it into a table, you can query it in plain English.

Questions? Join us on Slack.