Share tables between workers (Groovy)

Deephaven Core+ workers offer two different ways to share tables between them: URIs and Remote Tables. Calling ResolveTools.resolve(), subscribe, or snapshot copies the table data into the local worker's memory, and the result is a standard Deephaven table on which you can perform any operation.

A primary benefit of this approach is shared computation: a PQ that performs an expensive calculation can publish the result once, and multiple downstream workers can subscribe to it instead of each repeating the calculation independently. You can further reduce the data transferred and held locally by requesting only a subset of columns.

This guide covers how to share tables across workers in Deephaven using both methods. It describes their differences and the options available to control behavior when ingesting tables from remote workers. This guide presents everything from the standpoint of the worker that will ingest one or more tables from a different worker, as no additional configuration is needed on the worker that is serving the tables other than creating them.

When connecting to a remote table, Deephaven workers gracefully handle the upstream table state so that it ticks in lockstep with their own update graphs. Both the URI and remote table APIs provide reliable remote access to tables as well as graceful handling of disconnection and reconnection.

Note

For sharing tables between Legacy workers, see Preemptive Tables.

Data model and memory behavior

The following describes when data is transferred and how subscriptions affect memory on each worker.

Create a builder (forLocalCluster or forRemoteCluster): No data is transferred. The builder holds only connection parameters. Data transfer begins when you call subscribe or snapshot.

Subscribe (ResolveTools.resolve() or subscribe): Deephaven streams the full current state of the upstream table into the consuming worker's memory, then delivers incremental updates as the upstream table changes. The consuming worker holds the entire table — or the columns you request — in memory for the lifetime of the subscription.

Snapshot (ResolveTools.resolve() with snapshot=true or snapshot): Deephaven copies the current state of the upstream table into the consuming worker's memory as a static, non-updating table.

On the producing worker: Active subscriptions do not cause the producer to hold additional copies of the data in memory. However, maintaining subscriptions adds CPU and network overhead, as the producer serializes and sends incremental updates to each subscriber.

On the consuming worker: The full table (or the requested columns) is held in memory. The table data is not shared between the producer and consumer — each holds its own copy. For large or wide tables, use column filtering via SubscriptionOptions or the columns= URI parameter to limit what is transferred and stored locally.

Operations on subscribed tables: After subscribe or snapshot, the result is a standard Deephaven table. You can chain any table operation — where, update, updateView, join, aggBy, and so on. These operations run locally on the consuming worker and do not transfer additional data from the producer.

Where the performance benefit comes from: The benefit is shared computation, not reduced data storage. A PQ performs an expensive calculation once; multiple downstream workers subscribe to the result and receive only the final output rather than each repeating the calculation independently. Compared to older preemptive table approaches, consumers also no longer need to fetch each intermediate result when chaining operations — the consuming worker receives the upstream table once and operates on it locally.

URIs

You can share data between Core+ workers through Uniform Resource Identifiers (URIs). A URI is a sequence of characters that identifies a particular resource on a network. In Deephaven, you can connect to remote tables served by a Persistent Query (PQ) using a URI. For instance, consider a PQ called SomePQ with serial number 1234567890. This PQ produces a table called someTable. The URI for this table is either of:

pq://SomePQ/scope/someTable
pq://1234567890/scope/someTable

The two URI forms behave the same; however, a PQ's name can be changed, while its serial number typically cannot. Choose whichever better suits your use case.

Core+ workers can resolve either form of URI using the ResolveTools class. The PQ that serves the table must be running for the table to be fetched. The following code block pulls someTable from SomePQ using both the name and the serial number of the PQ:
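A minimal sketch of that call, assuming it runs in a Core+ Groovy session while SomePQ is running. The import path is the one used by Deephaven Community Core and is assumed to apply here; the variable names are illustrative.

```groovy
import io.deephaven.uri.ResolveTools

// Resolve the same table twice: once by PQ name, once by PQ serial number.
// Both results are subscribed (ticking) tables.
tableFromName = ResolveTools.resolve("pq://SomePQ/scope/someTable")
tableFromSerial = ResolveTools.resolve("pq://1234567890/scope/someTable")
```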

Important

When you subscribe to a remote table (without snapshot=true), the table is initially empty. It becomes populated after the initial snapshot arrives on the next update cycle.

If you resolve a subscribed table and immediately operate on its data in the same script block, you will see empty results:
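As an illustration, assuming a Core+ Groovy session and the SomePQ example from above (variable name is illustrative):

```groovy
import io.deephaven.uri.ResolveTools

liveTable = ResolveTools.resolve("pq://SomePQ/scope/someTable")

// Still inside the same script block: the initial snapshot has not been applied yet,
// so the size reported here is 0 even when the upstream table has rows.
println "Rows visible immediately after resolve: ${liveTable.size()}"
```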

To work with the actual data, either:

- In a notebook: Run the resolve call in one cell, then operate on the table in a subsequent cell.

- In a script: Use awaitUpdate(timeoutMillis) to wait for the next update cycle. Since awaitUpdate returns on any update notification (even if the table is still empty), check the table state in a loop if you expect rows:
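One way to write such a loop is sketched below. The 30-second overall deadline and the 1-second wait per iteration are hypothetical values chosen for the example, not recommendations from the product.

```groovy
import io.deephaven.uri.ResolveTools

liveTable = ResolveTools.resolve("pq://SomePQ/scope/someTable")

// Hypothetical overall deadline: stop waiting after 30 seconds.
long deadline = System.currentTimeMillis() + 30_000L

// awaitUpdate(timeoutMillis) returns on any update notification or on timeout,
// so the table state is re-checked on every pass through the loop.
while (liveTable.size() == 0 && System.currentTimeMillis() < deadline) {
    liveTable.awaitUpdate(1_000L)
}

println "Rows after waiting: ${liveTable.size()}"
```

The deadline keeps the script from blocking forever when the upstream table is legitimately empty.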

Snapshots (snapshot=true) return fully populated data immediately and do not require awaitUpdate().

Optional URI parameters

URIs can include encoded parameters that control the behavior of the resultant table. These parameters come after the table name in the URI, separated by the ? character. Multiple parameters are separated by the & character:

pq://SomePQ/scope/someTable?parameter=value&otherParameter=value1,value2

All of the following examples connect to someTable from SomePQ.

To get a static snapshot of the upstream table, use the snapshot option. The following example gets a static snapshot of the upstream table:
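A short sketch, assuming a Core+ Groovy session and a running SomePQ (variable name is illustrative):

```groovy
import io.deephaven.uri.ResolveTools

// snapshot=true returns a static, fully populated copy of the upstream table.
snapshotTable = ResolveTools.resolve("pq://SomePQ/scope/someTable?snapshot=true")
```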

To get only a subset of columns in the upstream table, use the columns option. The columns should be in a comma-separated list. The following example gets only Col1, Col2, and Col3:
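A short sketch under the same assumptions (Core+ Groovy session, running SomePQ, illustrative variable name):

```groovy
import io.deephaven.uri.ResolveTools

// Only Col1, Col2, and Col3 are transferred and held in local memory.
columnsTable = ResolveTools.resolve("pq://SomePQ/scope/someTable?columns=Col1,Col2,Col3")
```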

You can control the behavior of the table when the upstream table disconnects for any reason with onDisconnect. There are two different options for this:

- clear: This is the default option. It removes all rows on disconnect.
- retain: This option retains all rows at the time of disconnection.

The following example retains all rows in the event of a disconnection:
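A short sketch, assuming a Core+ Groovy session and a running SomePQ (variable name is illustrative):

```groovy
import io.deephaven.uri.ResolveTools

// With onDisconnect=retain, the rows present at the moment of disconnection stay visible.
retainedTable = ResolveTools.resolve("pq://SomePQ/scope/someTable?onDisconnect=retain")
```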

You can also control for how long Deephaven will try to reconnect to the upstream table after a disconnection with retryWindowMillis. If this parameter is not specified, the default window is 5 minutes. The following example sets the retry window to 30 seconds (30,000 milliseconds):
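A short sketch, assuming a Core+ Groovy session and a running SomePQ (variable name is illustrative):

```groovy
import io.deephaven.uri.ResolveTools

// Keep trying to reconnect for at most 30,000 ms after a disconnection.
shortRetryTable = ResolveTools.resolve("pq://SomePQ/scope/someTable?retryWindowMillis=30000")
```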

You can tell Deephaven how many times it can retry connecting to the upstream table within the retryWindowMillis window with maxRetries. If not specified, the default value is unlimited. The following example retries up to 3 times within the default 5-minute window:
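A short sketch, assuming a Core+ Groovy session and a running SomePQ (variable name is illustrative):

```groovy
import io.deephaven.uri.ResolveTools

// At most 3 reconnection attempts; retryWindowMillis is left at its 5-minute default.
limitedRetryTable = ResolveTools.resolve("pq://SomePQ/scope/someTable?maxRetries=3")
```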

You can also tell Deephaven to connect to the upstream table as a blink table with blink. The following example connects to the upstream table as a blink table:
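A short sketch, assuming a Core+ Groovy session and a running SomePQ. The text above names the blink parameter but not its value; writing it as blink=true, by analogy with snapshot=true, is an assumption that should be checked against the product reference.

```groovy
import io.deephaven.uri.ResolveTools

// Assumption: blink takes a boolean value in the same way snapshot does.
blinkTable = ResolveTools.resolve("pq://SomePQ/scope/someTable?blink=true")
```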

You can combine any number of these parameters as you'd like. The following example combines columns, retryWindowMillis, maxRetries, and blink:
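A sketch of the combined form, assuming a Core+ Groovy session and a running SomePQ. As with the single-parameter case, the blink=true spelling is an assumption; the other parameter values repeat the ones used earlier in this section.

```groovy
import io.deephaven.uri.ResolveTools

// Parameters are joined with '&' after the '?' that follows the table name.
String uri = "pq://SomePQ/scope/someTable" +
        "?columns=Col1,Col2,Col3" +
        "&retryWindowMillis=30000" +
        "&maxRetries=3" +
        "&blink=true" // assumed value syntax for blink

combinedTable = ResolveTools.resolve(uri)
```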

If referencing a PQ by name, the name must be URL-encoded. This is important when the name has special characters such as spaces or slashes. Deephaven recommends using java.net.URLEncoder.encode to escape values.

For example, consider a PQ named Sla/sh PQ\n! that produces someTable. The URI for this table is:
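The encoded URI can be built as sketched below. This assumes the name contains a literal backslash followed by the letter n rather than a newline character; the variable names are illustrative.

```groovy
import java.net.URLEncoder

// Assumption: the PQ name holds a literal backslash and 'n', hence the doubled backslash.
String pqName = 'Sla/sh PQ\\n!'

// Percent-encode the name so that '/', ' ', '\' and '!' cannot be misread as URI syntax.
String encodedName = URLEncoder.encode(pqName, 'UTF-8')
String uri = "pq://${encodedName}/scope/someTable"

println uri
```

For this name the encoder produces Sla%2Fsh+PQ%5Cn%21; note that URLEncoder writes a space as +. The resulting string can then be passed to ResolveTools.resolve.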

URIs from a legacy worker

Legacy workers have their own Java Resolve Tools library to connect to a table served by a Persistent Query. Otherwise, the flow is the same.

Note

Legacy workers do not support additional URI-encoded parameters or automatic reconnections when subscribing to Core+ workers.

Remote tables

Deephaven offers a remote table API that allows you to connect to tables in PQs on Core+ workers from a Legacy or Core+ worker. Remote tables, like URIs, connect to tables in a PQ and track updates from a different, remote worker. The remote table API allows you to connect to upstream tables in both your local Deephaven cluster as well as remote ones.

Local cluster

You can use the forLocalCluster method to create a RemoteTableBuilder that can connect to a table in a PQ on the same Deephaven cluster as the worker that is running the code.
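A minimal sketch of creating such a builder. The package name and the queryName and tableName setters are assumptions about the RemoteTableBuilder API and should be checked against its Javadoc.

```groovy
import io.deephaven.enterprise.remote.RemoteTableBuilder

// No data moves at this point; the builder only records which PQ and table to connect to.
// queryName(...) and tableName(...) are assumed setter names.
localBuilder = RemoteTableBuilder.forLocalCluster()
        .queryName("SomePQ")
        .tableName("someTable")
```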

Remote cluster

You can use the function forRemoteCluster to connect to a table in a PQ on a different Deephaven Enterprise cluster. Unlike forLocalCluster, it returns a RemoteTableBuilder.Unauthenticated object that must be authenticated before you can subscribe. You must provide the URL of the remote cluster to connect to.

Note

The default port is 8000 for Deephaven installations with Envoy, and 8123 for installations without Envoy.

URL format

When passing a URL string directly to forRemoteCluster, the URL must end with /iris/connection.json:
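For illustration, a URL of that shape can be assembled as follows. The host name is hypothetical, and port 8000 assumes an installation with Envoy.

```groovy
// Hypothetical host; use 8123 instead of 8000 for installations without Envoy.
String host = "deephaven.example.com"
int port = 8000

String connectionUrl = "https://${host}:${port}/iris/connection.json"
println connectionUrl
```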

Authentication

Connecting to a remote Enterprise cluster requires authentication. There are two approaches:

Option 1: Authenticate on the builder

The RemoteTableBuilder.Unauthenticated object returned by forRemoteCluster supports password and privateKey authentication methods:
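A sketch of password authentication on the builder. The URL and credentials are hypothetical, and the package name, the password(user, password) signature on the builder, and the queryName and tableName setters are assumptions to verify against the Javadoc.

```groovy
import io.deephaven.enterprise.remote.RemoteTableBuilder

// Hypothetical cluster URL and credentials; replace with real values.
String connectionUrl = "https://deephaven.example.com:8000/iris/connection.json"

// forRemoteCluster returns RemoteTableBuilder.Unauthenticated;
// password(...) is assumed to return the authenticated RemoteTableBuilder.
remoteBuilder = RemoteTableBuilder.forRemoteCluster(connectionUrl)
        .password("someUser", "somePassword")
        .queryName("SomePQ")
        .tableName("someTable")
```

In real scripts, read credentials from a secure source rather than embedding them in code.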

Option 2: Use a DeephavenClusterConnection

For more control over the connection, including delegateToken authentication, create a DeephavenClusterConnection and pass it to forRemoteCluster:

The DeephavenClusterConnection class supports the following authentication methods:

- password(user, password): Authenticate with username and password.

- privateKey(keyFile): Authenticate with a private key file.

- delegateToken(token): Authenticate with a delegate token. The argument must be an AuthToken object, not a string. In a Groovy script running on a worker, obtain one from the local authentication manager:

Remote table builder

forLocalCluster returns a RemoteTableBuilder directly. forRemoteCluster returns a RemoteTableBuilder.Unauthenticated that must be authenticated first. Either way, you end up with a RemoteTableBuilder, which provides the following methods.

Subscribe

The subscribe method connects to the remote PQ and subscribes to the named table, so that the local copy stays up to date as the upstream table changes.

The result is a standard Deephaven table. You can perform any table operations on it:
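A sketch of subscribing and then operating on the result. It passes an options object built with SubscriptionOptions.builder().build(); that factory, the package names, and the queryName and tableName setters are assumptions about the API, and Col1 and Col2 are the example columns used earlier in this guide.

```groovy
import io.deephaven.enterprise.remote.RemoteTableBuilder
import io.deephaven.enterprise.remote.SubscriptionOptions

// Subscribe to someTable in SomePQ on the local cluster (assumed builder methods).
someTable = RemoteTableBuilder.forLocalCluster()
        .queryName("SomePQ")
        .tableName("someTable")
        .subscribe(SubscriptionOptions.builder().build())

// Ordinary table operations run locally on the consuming worker.
firstColumns = someTable.view("Col1", "Col2")
```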

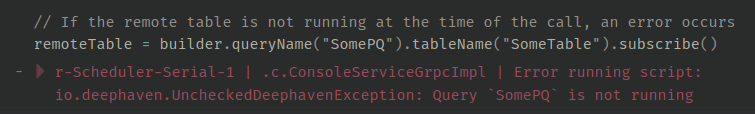

By default, the remote PQ must be running at the moment subscribe is invoked. If it is not running and no table definition was given to the builder, the operation fails with an error.

You can pass a table definition to the builder to avoid this error. In this case, subscribe will succeed even if the remote PQ is down when it is invoked. Data will not show up (the table will look empty). The remote table will then update if and when the remote PQ becomes available and provides data for it.
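A sketch of supplying a definition up front. The schema below is hypothetical and must match the table the PQ actually publishes; the tableDefinition(...) setter name, like the other builder methods, is an assumption to verify against the RemoteTableBuilder Javadoc.

```groovy
import io.deephaven.engine.table.ColumnDefinition
import io.deephaven.engine.table.TableDefinition
import io.deephaven.enterprise.remote.RemoteTableBuilder
import io.deephaven.enterprise.remote.SubscriptionOptions

// Hypothetical schema for someTable.
definition = TableDefinition.of(
        ColumnDefinition.ofString("Col1"),
        ColumnDefinition.ofInt("Col2"),
        ColumnDefinition.ofDouble("Col3"))

// With a definition in place, subscribe can succeed while SomePQ is down;
// the table stays empty until the PQ becomes available.
resilientTable = RemoteTableBuilder.forLocalCluster()
        .queryName("SomePQ")
        .tableName("someTable")
        .tableDefinition(definition) // assumed setter name
        .subscribe(SubscriptionOptions.builder().build())
```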

Tip

For tables with complex schemas, creating a TableDefinition object by adding ColumnDefinition objects one-by-one can be cumbersome. The TableDefinition Javadoc lists several static factory methods that can simplify this process.

While a locally defined remote table is updating normally, the remote PQ providing the data for its subscription may go down. In this case, the table will stop updating but will not fail. Instead, the table will look empty as if it has no rows. If the remote PQ comes back up and resumes providing updates, the locally defined remote table will resume updating with content according to the table in the remote PQ.

Note

The locally defined remote table reflects whatever the restarted instance of the remote PQ publishes. A restarted PQ does not necessarily resume from the state a table had before the crash; it may instead reset that table to its initial state. Make sure you understand how the remote PQ behaves on restart so that you know what to expect in the local remote table.

You can optionally tell Deephaven to subscribe to a remote table as a blink table, in which only rows from the current update cycle are kept in memory:

Snapshot

Calling snapshot creates a local, static snapshot of the table's content in the remote PQ at the time of the call.
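A sketch of taking a snapshot, assuming SomePQ is running. The package names, the builder setters, and the SubscriptionOptions.builder().build() factory are assumptions about the API.

```groovy
import io.deephaven.enterprise.remote.RemoteTableBuilder
import io.deephaven.enterprise.remote.SubscriptionOptions

// The result is a static table; it does not change after this call returns.
staticCopy = RemoteTableBuilder.forLocalCluster()
        .queryName("SomePQ")
        .tableName("someTable")
        .snapshot(SubscriptionOptions.builder().build())
```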

Caution

If the remote PQ is not running at the time of the snapshot call, it will fail with an error. In this case, it makes no difference if a table definition was provided to the builder.

Once the snapshot data is obtained from the remote PQ, it will not change, and the local table will not be affected if the remote PQ goes down since the local table is static.

Subset of columns

Both subscribe and snapshot accept a SubscriptionOptions argument, which limits the columns from the original table that will be present locally.
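A sketch of a column-limited subscription. addIncludedColumns is named later in this guide; the SubscriptionOptions.builder() factory, the varargs call shape, and the builder setters on RemoteTableBuilder are assumptions.

```groovy
import io.deephaven.enterprise.remote.RemoteTableBuilder
import io.deephaven.enterprise.remote.SubscriptionOptions

// Request only Col1 and Col3 from the upstream table.
options = SubscriptionOptions.builder()
        .addIncludedColumns("Col1", "Col3")
        .build()

narrowTable = RemoteTableBuilder.forLocalCluster()
        .queryName("SomePQ")
        .tableName("someTable")
        .subscribe(options)
```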

Pre-subscription filtering

By default, subscribe transfers the entire upstream table to the consuming worker. For large tables, you can reduce memory usage by filtering rows on the producer side before they are sent. Use the addFilters method on SubscriptionOptions.Builder to specify row filters that run on the producing worker:
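A sketch of a filtered subscription. The filter expression is hypothetical and assumes Col2 is numeric; the SubscriptionOptions.builder() factory and the RemoteTableBuilder setters are assumptions about the API.

```groovy
import io.deephaven.enterprise.remote.RemoteTableBuilder
import io.deephaven.enterprise.remote.SubscriptionOptions

// The filter is evaluated on the producing worker; only matching rows are sent.
options = SubscriptionOptions.builder()
        .addFilters("Col2 > 100") // hypothetical filter
        .build()

filteredTable = RemoteTableBuilder.forLocalCluster()
        .queryName("SomePQ")
        .tableName("someTable")
        .subscribe(options)
```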

Tip

Pre-subscription filtering is especially useful for memory-constrained consumers. The filter runs on the producing worker, so only matching rows are transferred and stored locally — reducing both network bandwidth and local heap usage.

The filter syntax is the same as the where clause. Multiple filters are combined with AND logic. You can combine addFilters with addIncludedColumns to limit both rows and columns:
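A sketch that limits both rows and columns. The filters are hypothetical and assume Col1 is a string column and Col2 is numeric; the SubscriptionOptions.builder() factory and the RemoteTableBuilder setters are assumptions about the API.

```groovy
import io.deephaven.enterprise.remote.RemoteTableBuilder
import io.deephaven.enterprise.remote.SubscriptionOptions

// Both filters must match (AND logic), and only two columns are transferred.
options = SubscriptionOptions.builder()
        .addFilters("Col1 == `A`", "Col2 > 100") // hypothetical filters
        .addIncludedColumns("Col1", "Col2")
        .build()

smallTable = RemoteTableBuilder.forLocalCluster()
        .queryName("SomePQ")
        .tableName("someTable")
        .subscribe(options)
```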

Consult the API documentation for SubscriptionOptions to see other options that can be set using this class.

Limitations and considerations

When working with remote tables, it is important to be aware of the following:

- Memory usage: The consuming worker holds the full subscribed table in memory. Unlike viewport tables, which can defer memory usage depending on the query pattern, there is no deferral for subscribed tables. See Data model and memory behavior for a full discussion, including effects on the producing worker.

- Message size: Remote tables in Core+ do not have the same payload size limitations as Legacy preemptive tables (which were limited by Java NIO). However, there is a maximum gRPC message size. The system automatically fragments large messages into multiple record batches. As long as a single row can fit within the maximum message size, the transfer will succeed without any special configuration.

- Logging: Log entries related to remote tables are prefixed with "Connection-aware remote table". This can be useful for auditing connections and disconnections.